When technology fails, businesses come to a standstill. Addressing systemic errors promptly or preventing them altogether is essential for minimizing losses during downtime. Investing in network and server monitoring is a smart start, but first, it's critical to understand our heavy reliance on technology and the immense financial toll of disruptions.

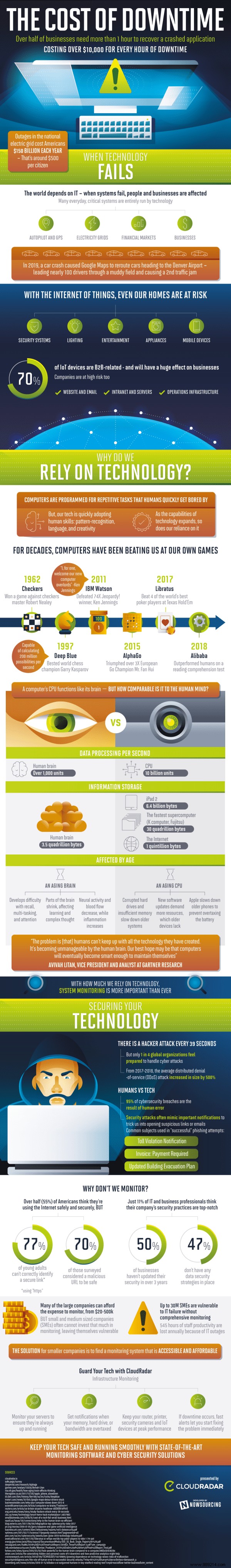

On average, companies take more than an hour to recover a crashed application, and each hour of downtime costs more than $10,000. Nationwide power grid blackouts alone cost Americans $150 billion annually, or about $500 per citizen.
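To make the arithmetic behind these figures concrete, here is a minimal Python sketch. The function names are hypothetical, and the round population figure of 300 million is an assumption chosen for illustration, not a number from the infographic; the $10,000 hourly rate is the lower bound cited in this article.

```python
def downtime_cost(hours_down: float, cost_per_hour: float = 10_000.0) -> float:
    """Estimate the loss from an outage.

    The default hourly rate is the article's lower bound; a real
    business should substitute its own figure.
    """
    if hours_down < 0:
        raise ValueError("hours_down must be non-negative")
    return hours_down * cost_per_hour


def per_capita_cost(total_cost: float, population: int) -> float:
    """Spread a national cost evenly across a population."""
    if population <= 0:
        raise ValueError("population must be positive")
    return total_cost / population


# A 90-minute outage at the article's minimum hourly rate.
outage_loss = downtime_cost(1.5)

# $150 billion in blackout costs over an assumed 300 million people.
blackout_share = per_capita_cost(150e9, 300_000_000)

print(f"Outage loss: ${outage_loss:,.0f}")
print(f"Blackout cost per person: ${blackout_share:,.0f}")
```

With these assumptions, a 90-minute outage costs at least $15,000, and the blackout figure works out to $500 per person, in line with the estimate above.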

In today's digital world, information technology powers nearly everything we depend on daily, from airplane autopilots and Tesla's advanced driver-assistance systems to GPS navigation, power grids, financial markets, and core business operations.

When these systems misjudge, the results are tangible. In 2019, after a car accident, Google Maps rerouted nearly 100 drivers heading to Denver airport through a muddy field, creating a second traffic jam.

Technology has permeated our homes too: security alarms, entertainment systems like TVs and gaming consoles, smart devices, tablets, mobiles, and even appliances such as dishwashers and stoves. Notably, 70% of IoT devices serve B2B applications, exposing businesses to risks in websites, emails, intranets, servers, and operational infrastructure.

We rely on computers for their ability to handle repetitive tasks that exhaust humans. Their role keeps expanding, with AI now enabling pattern recognition, language processing, and creative problem-solving.

Computers have outperformed humans in games for decades. In 1962, checkers champion Robert Nealey lost to a computer. IBM's Deep Blue, evaluating 200 million positions per second, defeated world chess champion Garry Kasparov in 1997. In 2011, IBM's Watson beat Jeopardy! champions Brad Rutter and Ken Jennings, the latter a 74-game winner, prompting Jennings to quip: "I for one welcome our new computer overlords."

AlphaGo bested three-time European Go champion Fan Hui in 2015. Libratus, an AI poker program, topped four of the world's best Texas Hold'em players in 2017. In 2018, an Alibaba AI model even outscored humans on a reading comprehension test.

But how does a processor stack up against the human brain? The brain processes over 1,000 data units per second and stores about 3.5 quadrillion bytes (3.5 petabytes). Top supercomputers like Fujitsu's K hold 30 quadrillion bytes, the internet holds around 1 quintillion bytes, and an iPad 2 holds 6.4 billion bytes.

As Gartner analyst Avivah Litan notes, "The problem is humans can't keep up with all the technology they've created. It becomes unmanageable by the human brain. Perhaps our best hope is that computers will eventually become smart enough to sustain themselves."

Aging brains struggle with memory, multitasking, and focus as regions shrink, neural activity declines, blood flow reduces, and inflammation rises—impairing learning and complex thought.

Computers age too, facing hard drive failures, memory shortages, slowdowns, and incompatibility with resource-intensive software updates. Apple has even admitted to throttling older iPhones to prevent unexpected shutdowns caused by aging batteries.

Security adds to the pressure: a cyberattack occurs every 39 seconds, only 1 in 4 organizations feels equipped to respond, and 95% of breaches stem from human error.

See also: 5 cybersecurity training tips for employees

For small businesses, affordable, accessible monitoring systems are the answer. Safeguard your infrastructure, cut downtime costs, and deploy robust server monitoring and cybersecurity solutions.
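For a sense of what the simplest form of server monitoring involves, here is a minimal Python sketch of an availability check that tests whether a TCP port accepts connections. The function names and the example targets are hypothetical and are not taken from any particular monitoring product; a production system would add scheduling, alerting, and history on top of a check like this.

```python
import socket


def is_port_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and opens the TCP connection;
        # the context manager closes the socket again right away.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS failures.
        return False


def check_targets(targets: list[tuple[str, int]]) -> dict[str, bool]:
    """Check each (host, port) pair once and report which ones are reachable."""
    return {f"{host}:{port}": is_port_open(host, port) for host, port in targets}
```

Calling `check_targets([("example.com", 443)])` returns a dictionary mapping each target to `True` or `False`. Running such a check every minute and sending a notification when a value flips to `False` is the core idea behind a basic uptime monitor.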

Infographic by CloudRadar Server Monitoring