Artificial intelligence powers everything from everyday automation to self-driving cars and facial recognition. But what happens when AI inherits our human biases? Real-world failures show it's not science fiction—it's happening today.

Yet an AI system's outputs are only as unbiased as the data and programming we give it, and humans carry inherent biases.

The challenge: How do we build ethical, unbiased AI?

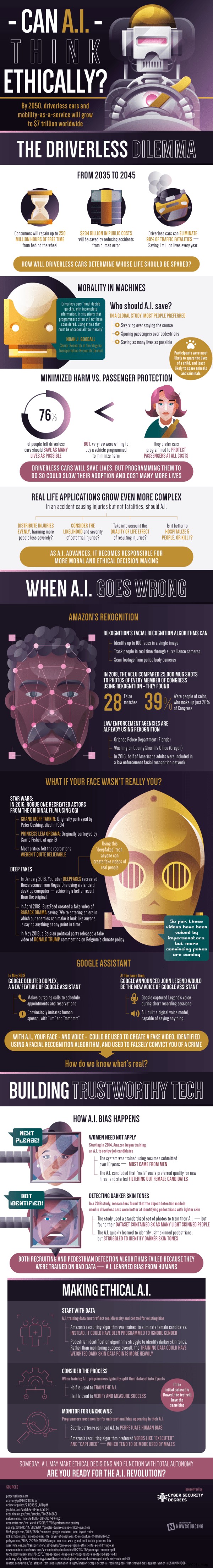

Attempts to deploy AI for routine tasks have repeatedly exposed flaws. Amazon's experimental recruiting tool, trained on historical hiring data, systematically downgraded women's applications and even penalized resumes that contained the word "women's."

More alarmingly, a 2019 study found that pedestrian-detection models of the kind used in self-driving cars detect light-skinned pedestrians more accurately than dark-skinned ones, in part because the training data was imbalanced, with about three times as many light-skinned examples.

Facial recognition used by police raises similar concerns. While some cities have banned it, places such as Orlando, Florida, and Washington County, Oregon, have deployed it despite its flaws. When the ACLU tested one system by comparing photos of members of Congress against 25,000 arrest photos, it returned 28 false matches, 39% of them involving people of color. Because the technology can be run on body-camera and security footage, errors like these carry amplified risks.

Ethically programmed AI could transform lives. Autonomous vehicles might save 250 million hours annually, cut $234 billion in accident costs, and reduce road fatalities by 90%—if done right.

Yet dilemmas persist, such as the 'trolley problem.' Only 75% of people agree that cars should prioritize saving the most lives. Most favor sparing children over criminals or animals, but few would pay extra for a car programmed that way.

As the adage goes, 'garbage in, garbage out.' To counter bias, we must first dissect human prejudices and exclude them from training data.

Techniques such as oversampling underrepresented groups (e.g., darker skin tones) or gender-neutral weighting help. Regularly auditing both inputs and outputs helps catch subtle biases before they scale into systemic problems.
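As a rough illustration of the two ideas above, here is a minimal Python sketch that balances a dataset by random oversampling and then audits an outcome rate per group. The function names (`oversample`, `rate_by_group`), the field names (`skin_tone`, `detected`), and the toy records are all hypothetical, invented for this example; they do not come from any of the systems or studies mentioned in this article.

```python
import random
from collections import Counter, defaultdict


def oversample(records, group_key, seed=0):
    """Randomly duplicate records from smaller groups until every group
    is as large as the largest one (simple random oversampling)."""
    if not records:
        return []
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for rec in records:
        by_group[rec[group_key]].append(rec)
    target = max(len(members) for members in by_group.values())
    balanced = []
    for members in by_group.values():
        balanced.extend(members)
        # Draw with replacement to fill the gap up to the target size.
        balanced.extend(rng.choices(members, k=target - len(members)))
    rng.shuffle(balanced)
    return balanced


def rate_by_group(records, group_key, outcome_key):
    """Return, for each group, the share of records whose outcome is truthy."""
    hits = Counter()
    totals = Counter()
    for rec in records:
        totals[rec[group_key]] += 1
        hits[rec[group_key]] += bool(rec[outcome_key])
    return {group: hits[group] / totals[group] for group in totals}


# Hypothetical toy data with a 3:1 imbalance between groups.
data = [{"skin_tone": "light", "detected": True} for _ in range(6)]
data += [
    {"skin_tone": "dark", "detected": True},
    {"skin_tone": "dark", "detected": False},
]

balanced = oversample(data, "skin_tone")
print(Counter(rec["skin_tone"] for rec in balanced))      # group sizes after balancing
print(rate_by_group(data, "skin_tone", "detected"))       # audit: detection rate per group
```

Duplicating records only rebalances how often each group is seen during training; it adds no new information, so collecting more representative data is still preferable where possible. The per-group audit is the kind of check that could be rerun on a system's outputs after every retraining, so that a gap between groups is noticed early.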

Upfront investment in ethical design unlocks AI's full potential. Dive deeper with the infographic below.

Are we prepared for machines making life-or-death choices?

Source: Cybersecurity Degrees